AI technology is advancing rapidly, and those who don’t follow the latest trends may be surprised by some of the new possibilities AI brings.

We decided to run our own experiment to see whether internet users can pick up on the clues and recognize photos, artworks, music, and texts created by artificial intelligence.

What followed exceeded our wildest expectations.

In some survey groups, as many as 87% of respondents mistook an AI-generated image for a real photo of a person. Only 62% of respondents interested in AI and machine learning managed to answer more than half of the questions correctly. Among the remaining respondents, more than 64% were wrong most of the time.

“Well, that’s it then. AI will rule us all!”—one of our respondents commented upon seeing the results.

But will it though?

Take the AI Test

You can still take the test but your results will not be included in our study.

You’ll get your score at the end (it won’t ask for your email).

Good luck!

You can check the correct answers here.


Read on to find out more about the results of other participants.

A 2-Minute AI Primer

Let’s start with the basics.

In case you missed your AI deep learning classes at school, or happened to fall asleep during them, don’t worry. For some reason, I have no recollection of them either.

Here is a quick refresher on some core AI concepts.

Artificial Intelligence vs Machine Learning and Deep Learning:

- The term Artificial Intelligence has the broadest scope. It refers to any type of technology that appears to “make its own decisions.”

- Machine Learning is one of the methods used in advanced AI solutions. ML algorithms self-improve automatically by analyzing more and more data.

- Deep Learning is a type of machine learning that uses artificial neural networks.

Most of our examples use Generative Adversarial Networks—a deep learning technique.

GANs have two networks that try to outsmart each other. Imagine two AIs playing charades.

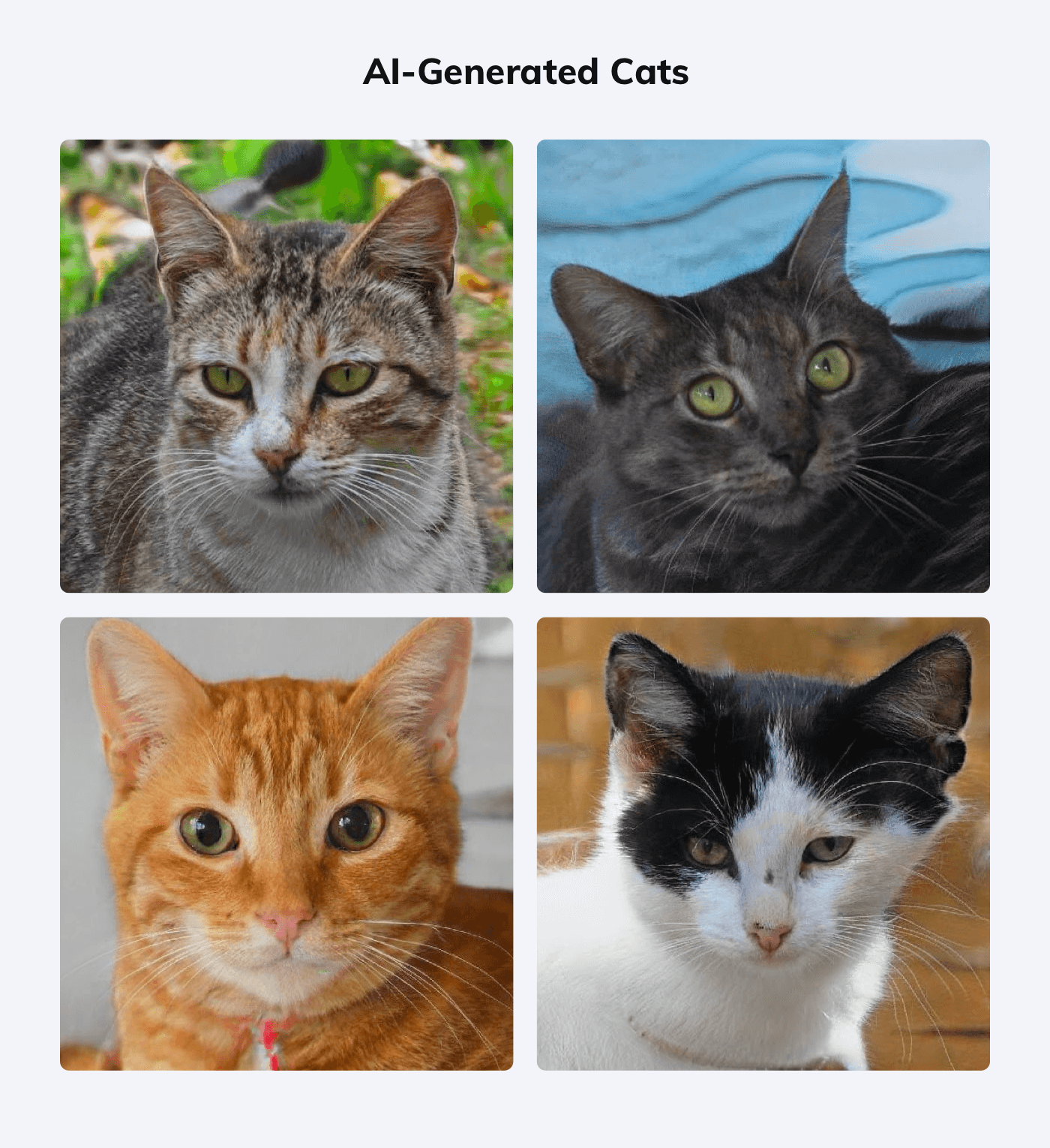

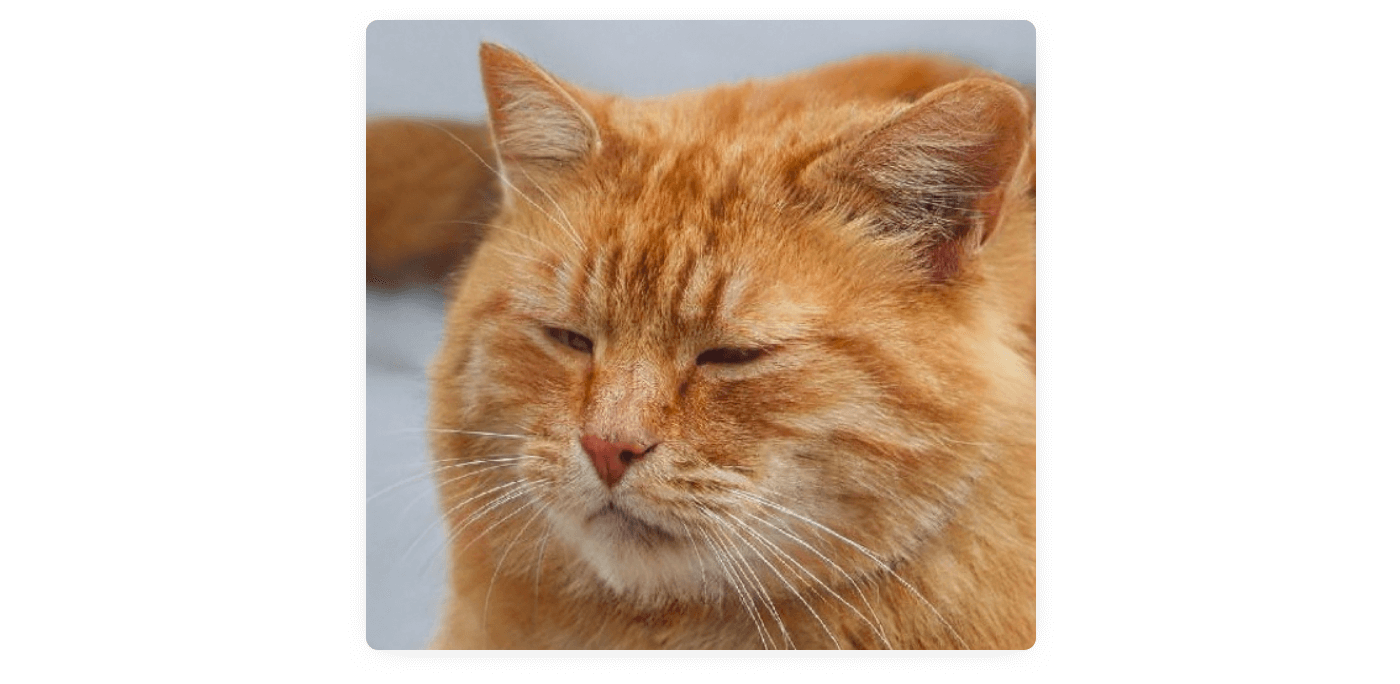

The two networks are known as the generator and the discriminator. For example, they can be trained on a huge database of cat photos. The generator creates a random image of a cat, and the discriminator evaluates whether it looks real enough. Both keep getting better and better, to the point where we get this:

These images are quite convincing, aren’t they? But if you take a closer look, you’ll notice that the tails, collars, and backgrounds are all messed up. That’s because in the original database some cats wear collars, some don’t. The AI tries to cover all bases and creates a mashup with a visible trace of a collar that blends with the fur.
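For the curious, the generator-versus-discriminator game described above can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not the way the cat images were made: the "photos" are just numbers clustered around 4.0, the generator is a single linear function, the discriminator is a single logistic unit, and every name and setting (`generator`, `discriminator`, `train`, the learning rate) is made up for this example.

```python
import math
import random


def sigmoid(t):
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def generator(z, a, b):
    """Turn random noise z into a fake sample (hypothetical linear generator)."""
    return a * z + b


def discriminator(x, w, c):
    """Estimated probability that x is real (hypothetical logistic model)."""
    return sigmoid(w * x + c)


def discriminator_step(x_real, x_fake, w, c, lr):
    """One gradient-ascent step on log D(real) + log(1 - D(fake))."""
    p_real = discriminator(x_real, w, c)
    p_fake = discriminator(x_fake, w, c)
    grad_w = (1.0 - p_real) * x_real - p_fake * x_fake
    grad_c = (1.0 - p_real) - p_fake
    return w + lr * grad_w, c + lr * grad_c


def generator_step(z, a, b, w, c, lr):
    """One gradient-ascent step on log D(G(z)): nudge the fake toward 'looks real'."""
    p_fake = discriminator(generator(z, a, b), w, c)
    grad_x = (1.0 - p_fake) * w  # derivative of log D(x) with respect to x
    return a + lr * grad_x * z, b + lr * grad_x


def train(steps=2000, lr=0.05, seed=0):
    """Alternate the two steps; 'real' data is assumed to be numbers around 4.0."""
    rng = random.Random(seed)
    a, b, w, c = 1.0, 0.0, 0.0, 0.0
    for _ in range(steps):
        x_real = rng.gauss(4.0, 0.5)
        z = rng.gauss(0.0, 1.0)
        w, c = discriminator_step(x_real, generator(z, a, b), w, c, lr)
        a, b = generator_step(z, a, b, w, c, lr)
    return a, b, w, c
```

Starting from `w = 0`, a single `discriminator_step` on a real sample of 4.0 and a fake of 0.0 makes `w` positive, so bigger numbers start to look "more real." The `generator_step` that follows then pushes `b` upward, toward the real data. Real GANs play the same game with deep networks and images, and they are far harder to train than this sketch suggests.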

Now—

Everything seems obvious once you know what to pay attention to.

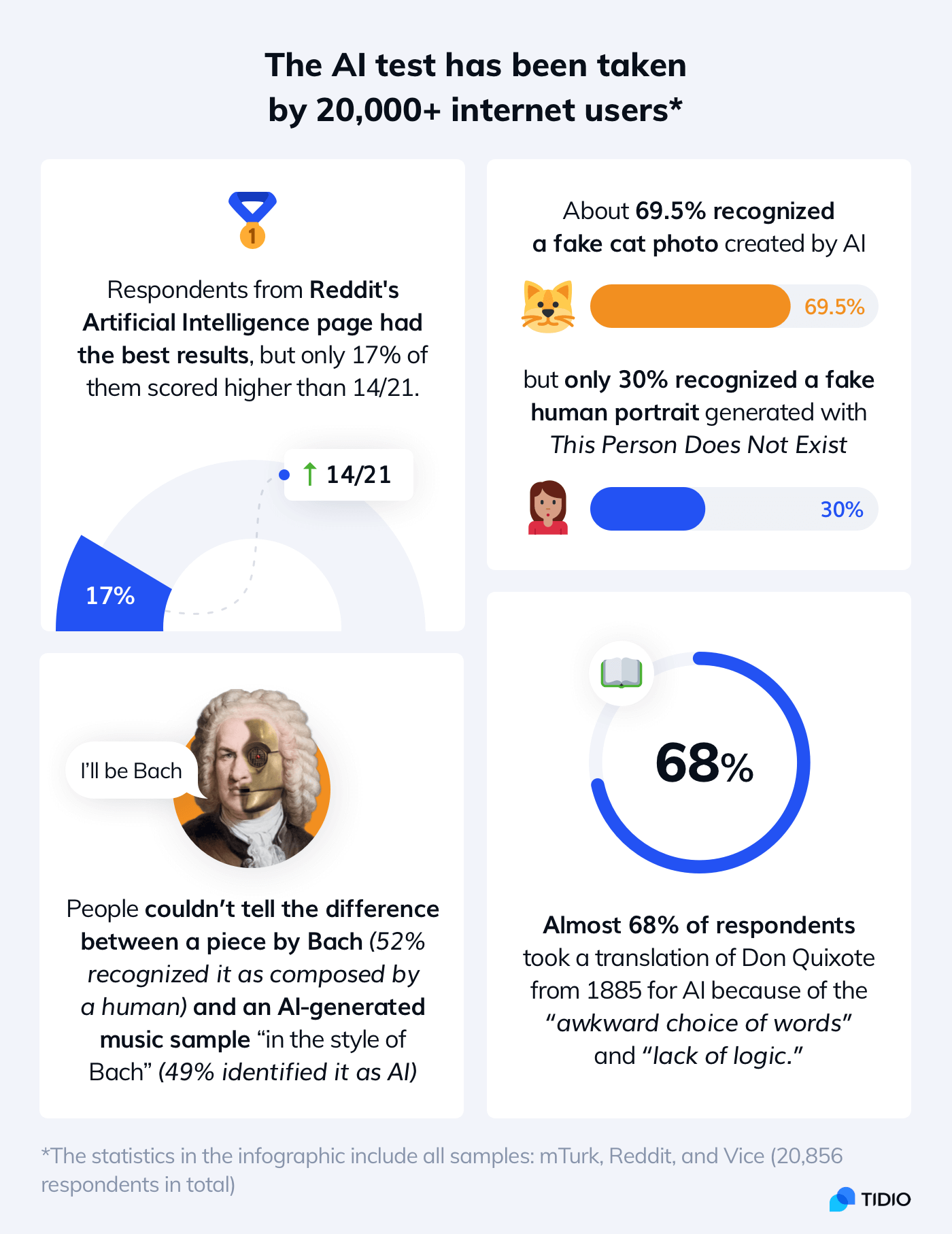

In our survey, it turned out that respondents were better at identifying fake cats than fake people!

Almost 70% recognized an AI-generated cat photo but only 32% correctly identified one of the AI-generated people (we’ll come back to that later).

Let’s see how our respondents managed to tell if something is real or created by AI.

Major Findings of Our AI Test

A typical response to the survey was:

I am so confused, I feel like I don’t know anything anymore. Is this real life?

But that seems a little excessive.

Here are some of the most interesting things that we discovered:

Respondents who felt confident about their answers had worse results than those who weren’t so sure

- Survey respondents who believed they answered most questions correctly had worse results than those with doubts. Over 78% of respondents who thought their score was very likely to be high got fewer than half of the answers right. In comparison, those who were most pessimistic did significantly better, with the majority of them scoring above the average.

Two-thirds of people who think they would recognize a chatbot struggle with identifying AI-generated texts

- The majority of our survey respondents believe they could tell whether they were talking to a human or an AI online. However, even the very confident ones identified the samples correctly only 34% of the time. While most internet users say they prefer to talk to a human rather than a chatbot, it’s possible that soon they may not be able to tell the difference.

About 24% of male and only 17% of female respondents made fewer than 3 mistakes when judging photos

- Male respondents did slightly better at judging photographs. Female respondents had a better ear for catching the human element in music. Interestingly, non-binary respondents were the most successful and performed better in all categories. More than 37% of respondents who identified as non-binary made fewer than 3 mistakes when judging photos (out of 7 examples). However, this correlation may be linked with age: significantly more Gen Z respondents do not identify as exclusively male or female.

Younger respondents are more likely to recognize AI-generated images than Millennials, Gen X, or Baby Boomers

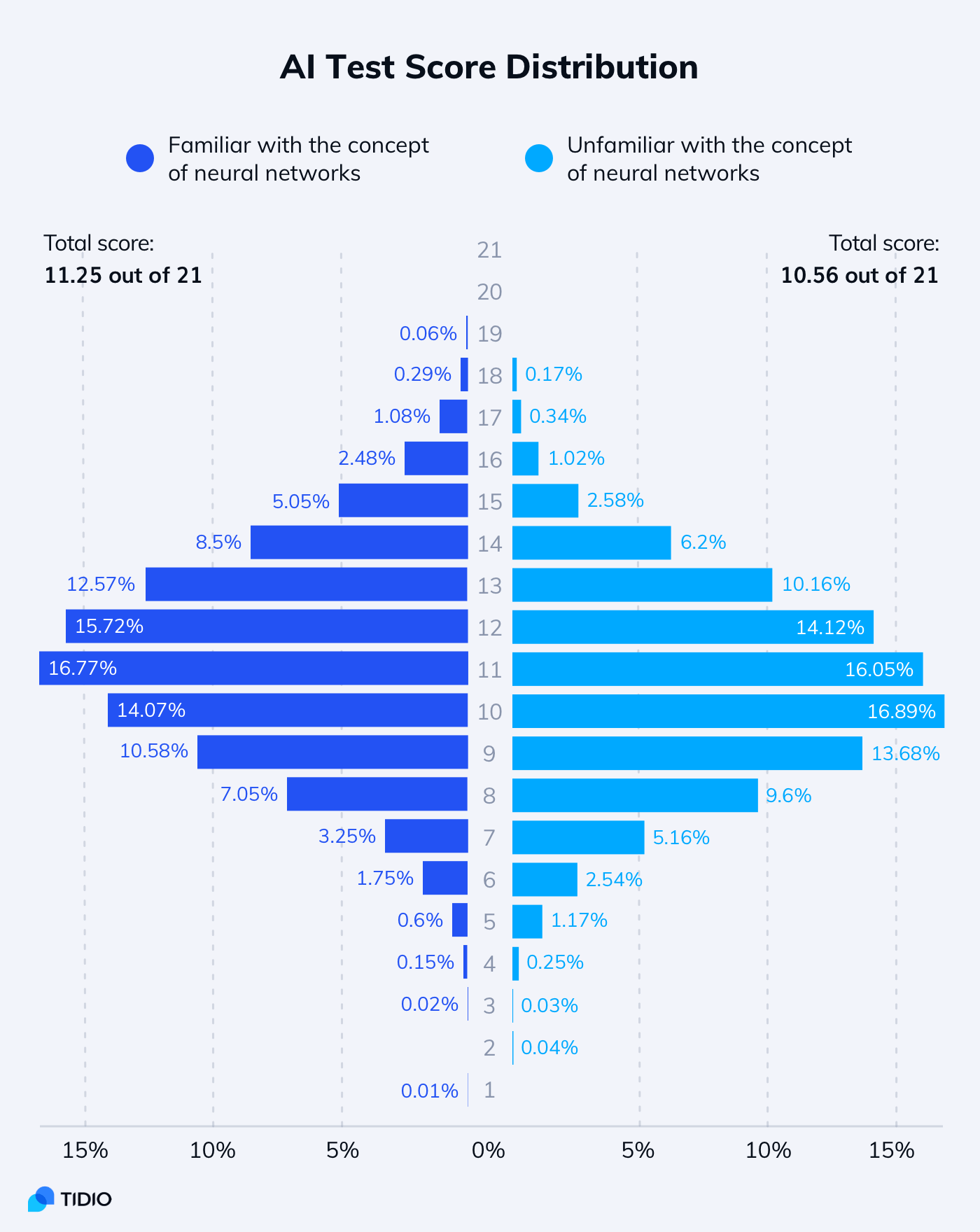

- The total average score was highest among Gen Z respondents (11.5 out of 21) and lowest among Baby Boomers (9.0). The results were similar in most categories, but the younger respondents did much better at evaluating the photos. Additionally, people interested in AI technologies and acquainted with terms like neural networks performed better than people who were unfamiliar with these concepts (scroll down for details).

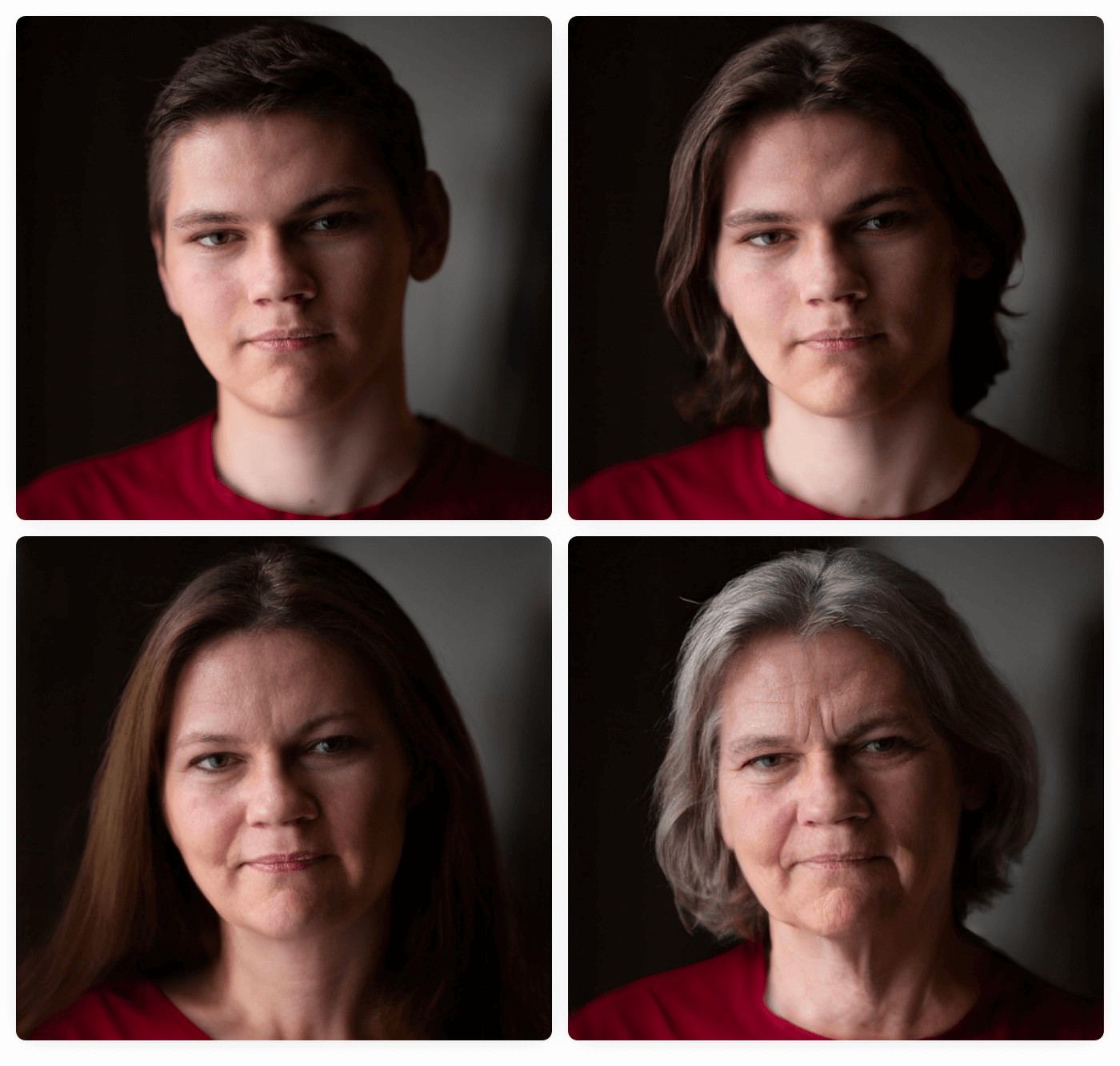

AI-modified images of people are more difficult to recognize than images generated by AI from scratch

- Some AI-generated examples were recognized correctly by more than 64.3% of respondents. However, AI filters applied to real photos turned out to be trickier. Only 1 in 5 respondents was able to point out the original photo among the ones with age and gender changed by AI.

Human vs AI: Detailed Results

Familiarity with AI technology proved to be the most important factor in the test score.

Below is the distribution of scores for people interested in AI vs the rest.

A significant number of our respondents were surprised by the difficulty of the test.

If we look at the individual questions, the respondents were usually either right or very divided. In some examples, they were way off. Winning streaks were extremely rare. Even when we excluded the samples without obvious hints, everyone made a mistake at some point.

It’s kind of scary, yet fascinating, how the lines between what a human can create and what AI can generate are blurring as these systems learn more.

FalseRelief

A respondent from Reddit

Let’s take a closer look at specific categories to find out more.

Photos: Real or AI

The survey included stock photos, photos modified with FaceApp, and images generated by sites such as ThisPersonDoesNotExist (and ThisCatDoesNotExist).

Would you like to give it a try?

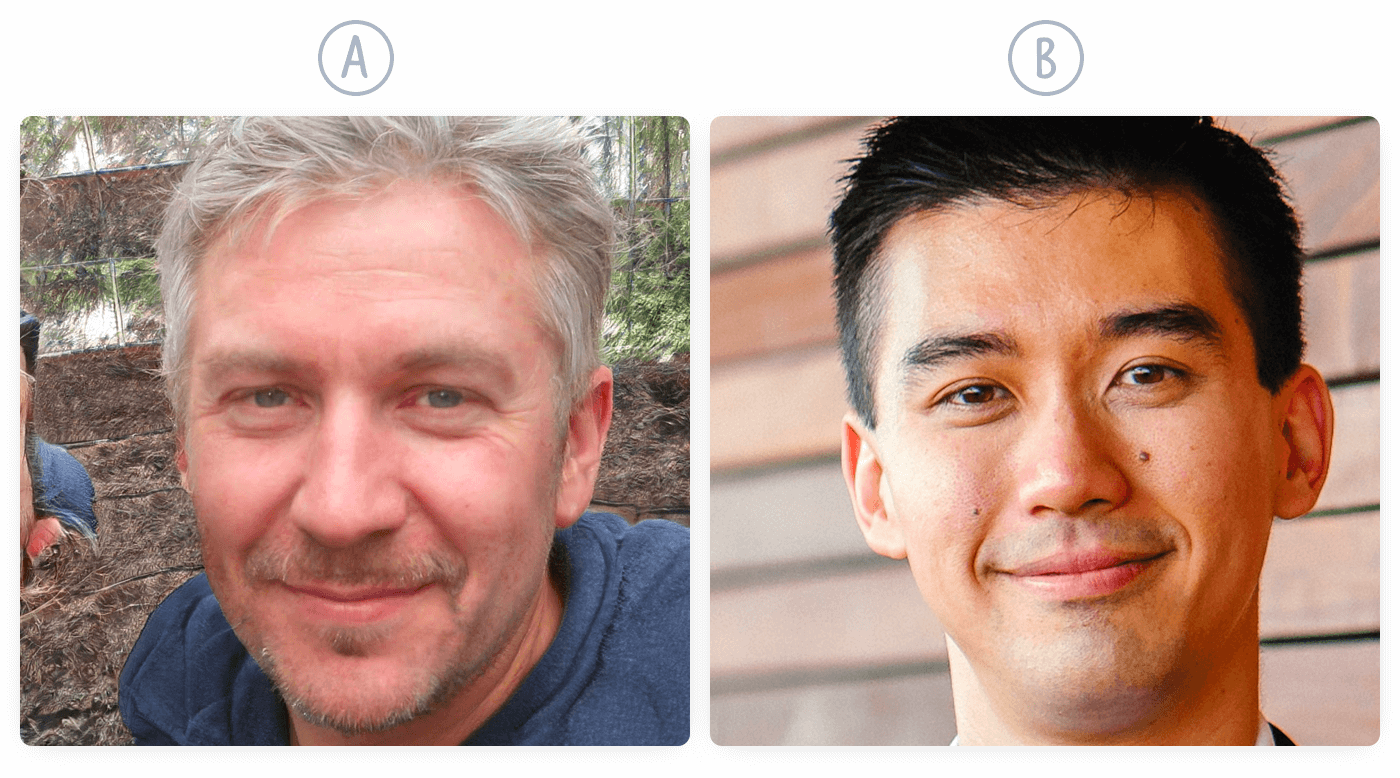

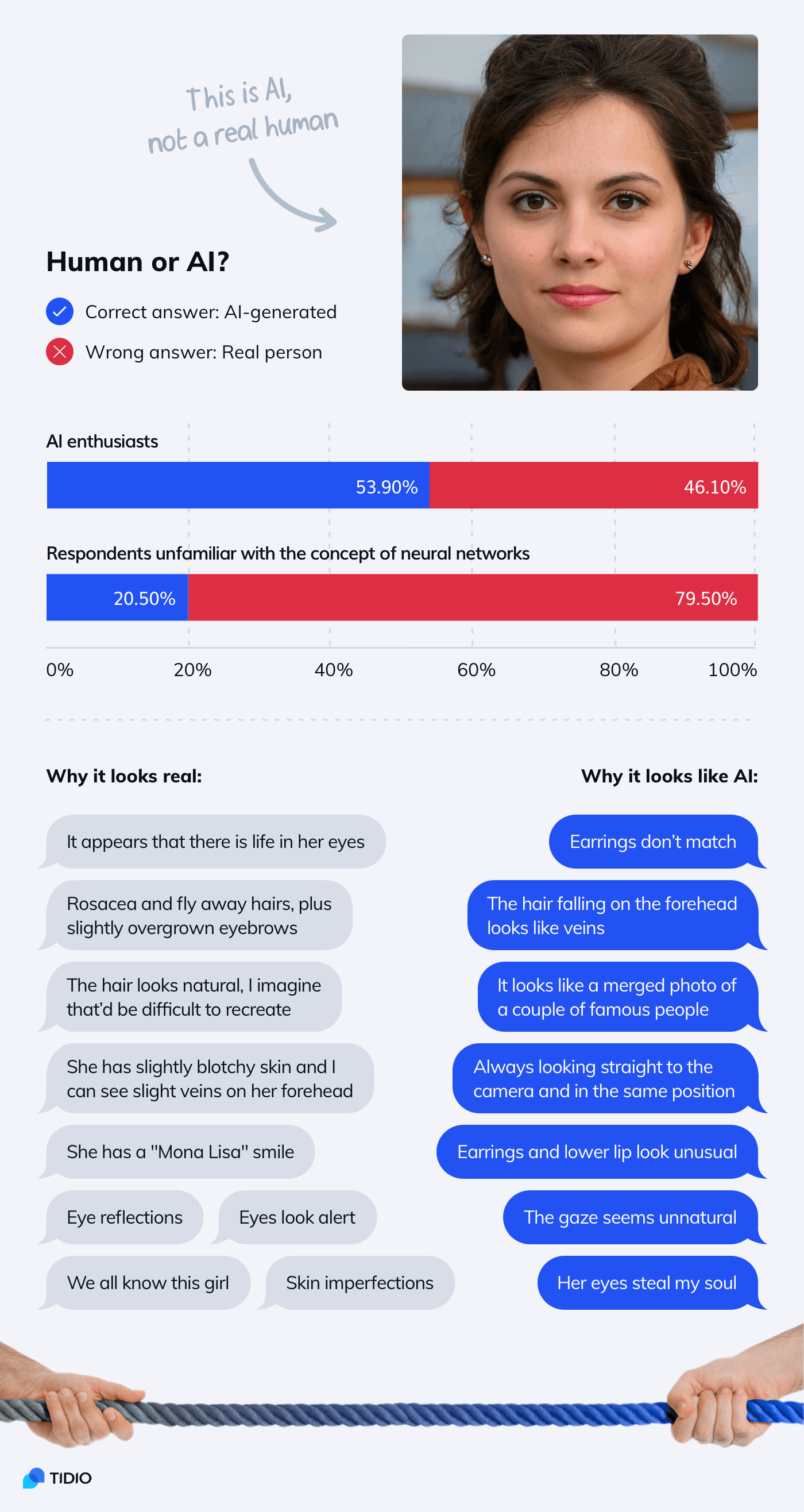

Here are two faces. One of them is a real photo and the other is an AI-generated image. Can you tell which is which?

What do you think?

- A is a real photo and B is AI-generated

- B is a real photo and A is AI-generated

Click here to see if you were right.

These two images were not part of our survey. However, they should give you an idea about the nature of our questions.

We found something quite disturbing.

While the example generated with an older version of StyleGAN tricked only 35.7% of our respondents, the one created recently with StyleGAN2 convinced 68.3% of them.

Let’s take a closer look at it:

The respondents interested in AI were able to tell that the photo was not real and that this person did not exist. The rest were convinced that it showed a real human.

As you can see, many of the arguments on both sides are very subjective.

More than 23% of the respondents who gave the correct answer stated that it “looks unnatural.” Many people simply go with their gut feeling. However, gut feeling can be deceptive, and it works both ways.

Why?

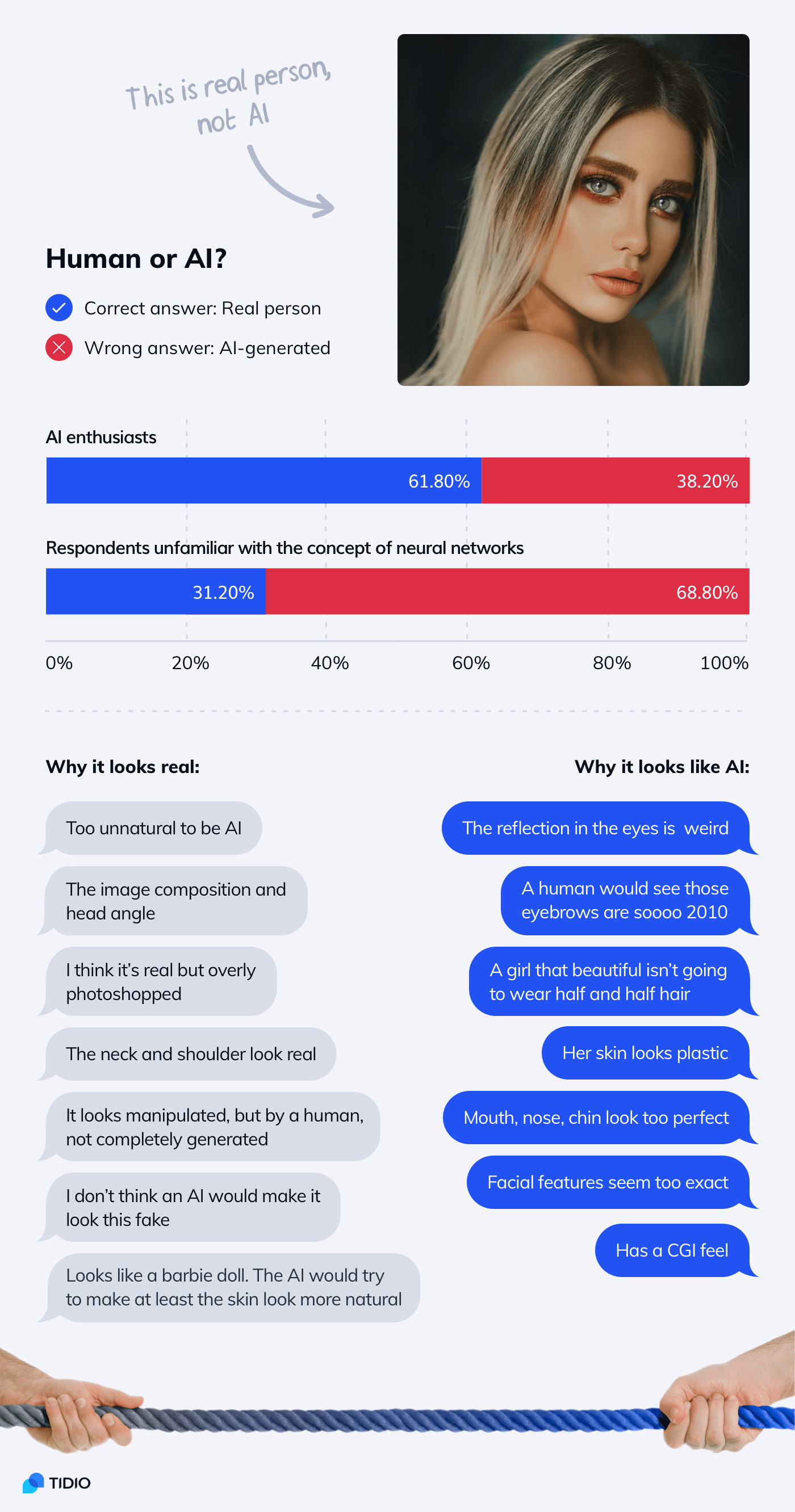

Here is the opposite case—a photo mistaken for an image generated by AI.

Almost 72% of those who believed this photo to be generated by AI thought that it “looks unnatural.”

As you can see, there are different kinds of “unnaturalness.” Some of them are specific to AI and others to humans. AI faces in themselves can be very convincing. But there are some things that should give you a hint.

How to spot the differences between real photos and AI:

- Photos that are heavily photoshopped were usually taken by humans—the AI algorithms are mostly trained with repositories of unedited photos

- AI struggles with replicating unique or unusual elements—a specific makeup pattern, earrings, clothes (or lack thereof), hair highlights, or accessories are a dead giveaway

- AI-generated faces are usually very symmetrical and both eyes are at the same level

- Real professional photos are sharper and have more details while amateur photos are noisier—most AI-generated photos are neither

- If a photo is cropped and doesn’t show shoulders, hands, or the whole hairdo, it could be AI-generated

- Skin imperfections and eye reflections don’t make images authentic

- Sometimes AI-generated hair and teeth are messed up, but that is not always the case

Paying attention to these details improves your odds of judging whether images were created by humans or AI. But the technology is improving very fast. You can never be 100% sure.

Artwork: Can AI Create Art?

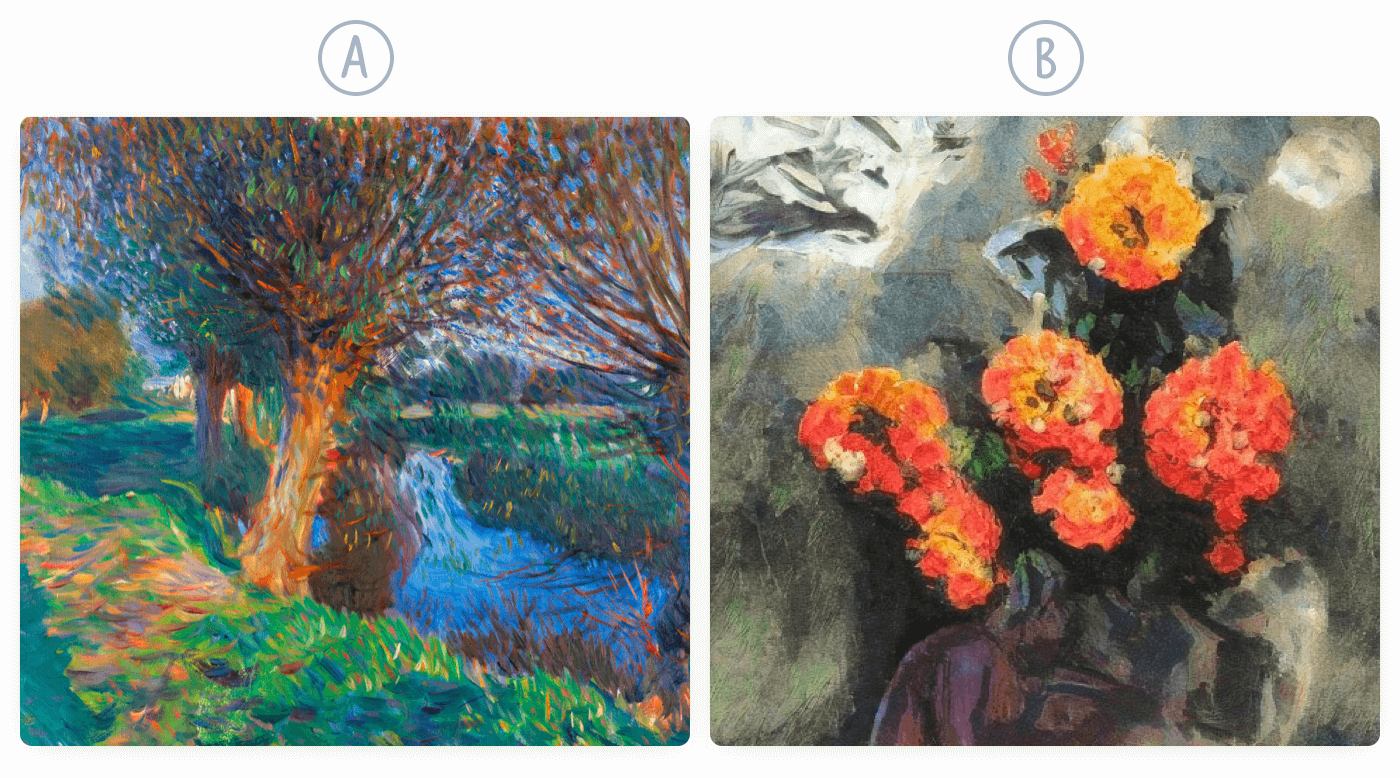

Once more, let’s start with a quiz question.

Can you tell which painting is real and which was generated with AI?

What do you think?

- A is a real painting and B is AI-generated

- B is a real painting and A is AI-generated

If you want to check the correct answer go here.

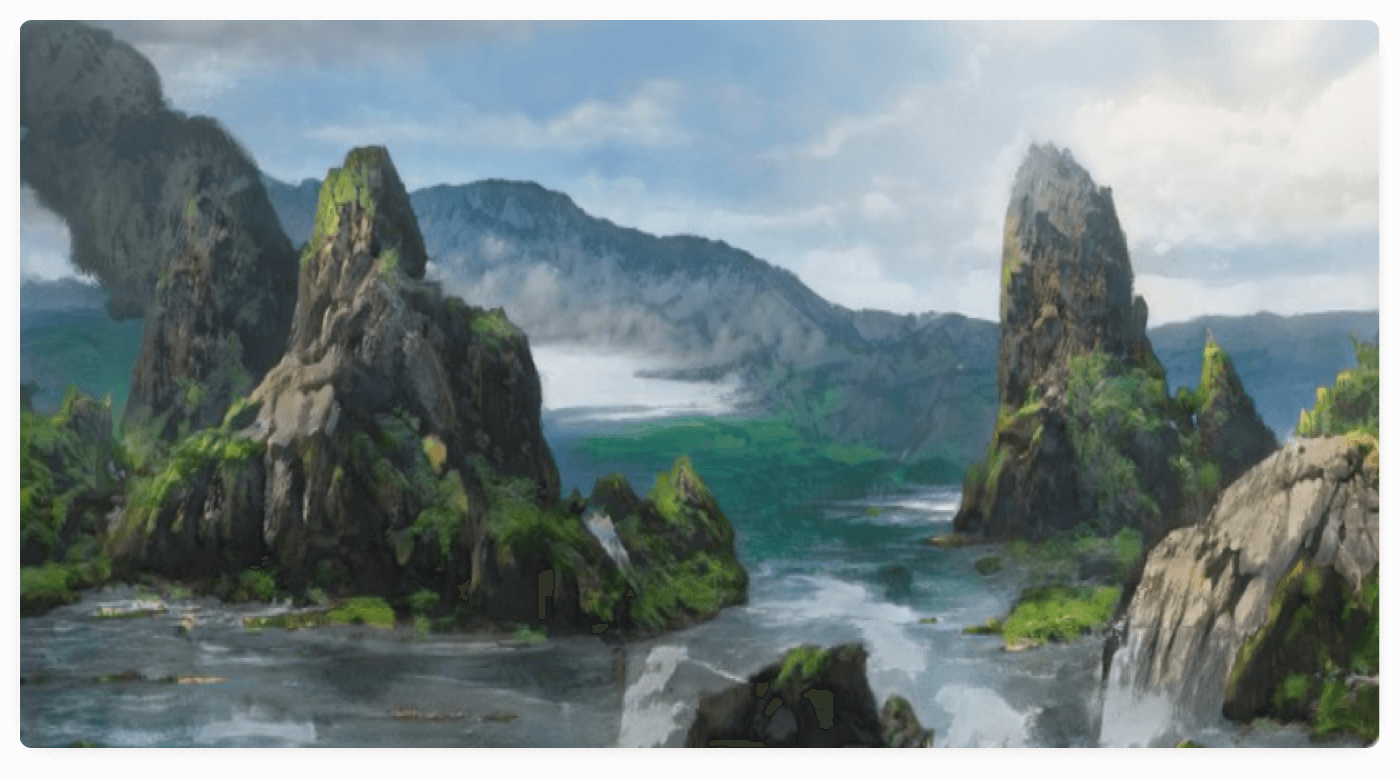

AI can conjure up amazing images in the blink of an eye. While these rarely end up in art galleries, there are some narrow areas where AI-generated content works surprisingly well.

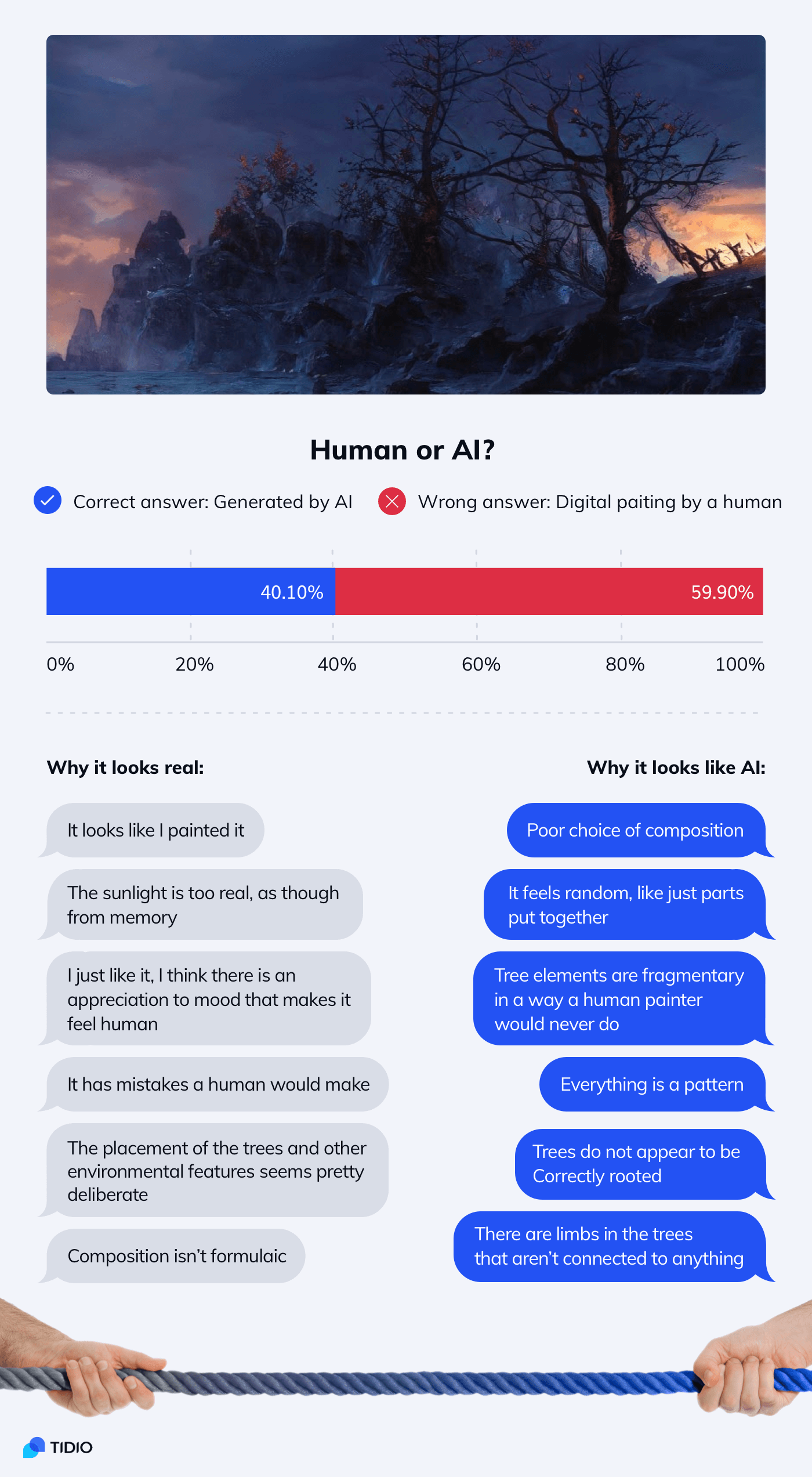

Here is an example of an AI-generated landscape that could be used as a background by a concept artist.

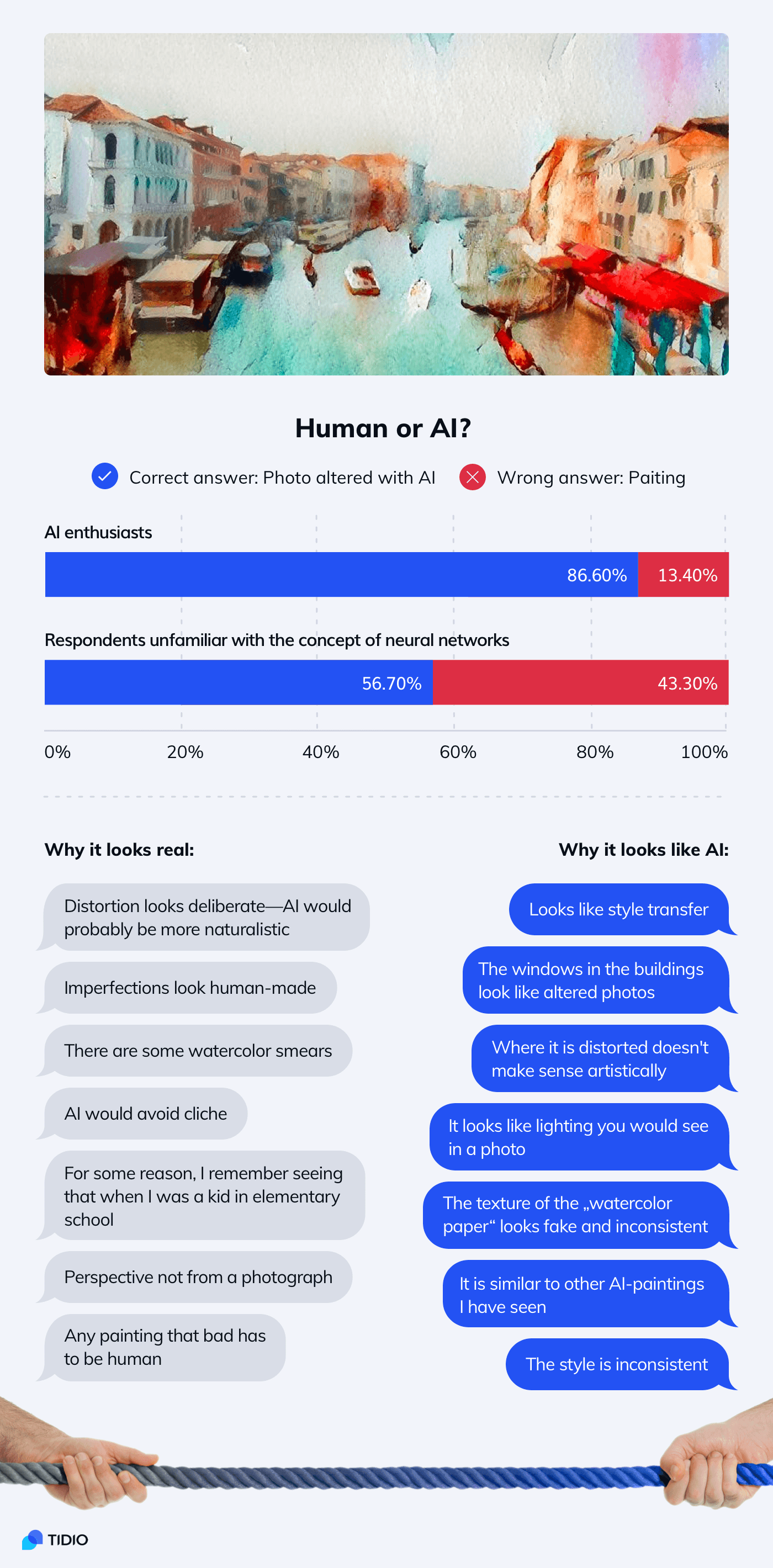

You can also very easily turn a photo into an impressionistic painting. To the untrained eye, the results are indistinguishable from the works of real painters.

In this example, AI enthusiasts and the general survey respondents were equally wrong.

Many of the respondents admitted that this artwork could be either and that there is no way of deciding for sure. Almost 9% believed that this image is “beautiful,” a quality they thought unlikely to be replicated by an algorithm.

The example above was generated with ArtBreeder. We can use sliders for aspects such as the number of trees or weather and lighting conditions. By changing the values, we can decide what we want our image to look like.

Apart from generating images from scratch, we can also create fake AI paintings by using filters on photos. Here is the original photo used for our experiment.

This technique didn’t convince the majority of the survey respondents. But their impressions are very interesting nonetheless.

Some respondents claimed that the picture was too bad or ugly. For some reason, AI is expected to create something more advanced. Some opinions even suggested that the image is uncreative precisely because it is human-made.

How can you tell if an artwork was created by a human or a machine?

- AI-generated art looks procedural—there are some visible swirl-like patterns that you can learn to recognize

- Artists apply more detail and definition when working on the most important element of their artwork

- AI processes everything indiscriminately

- With paintings that were digitized by means of photography, you can usually zoom in on details

- AI-generated artworks are usually low resolution and they lack detail

- Abstract, surreal, or landscape images may look convincing but AI can’t generate complex and realistic scenes with human figures

- StyleGAN can replicate brush strokes and textures but only in a very mechanical way that doesn’t follow the form of objects

Music: AI vs Human Composer Test

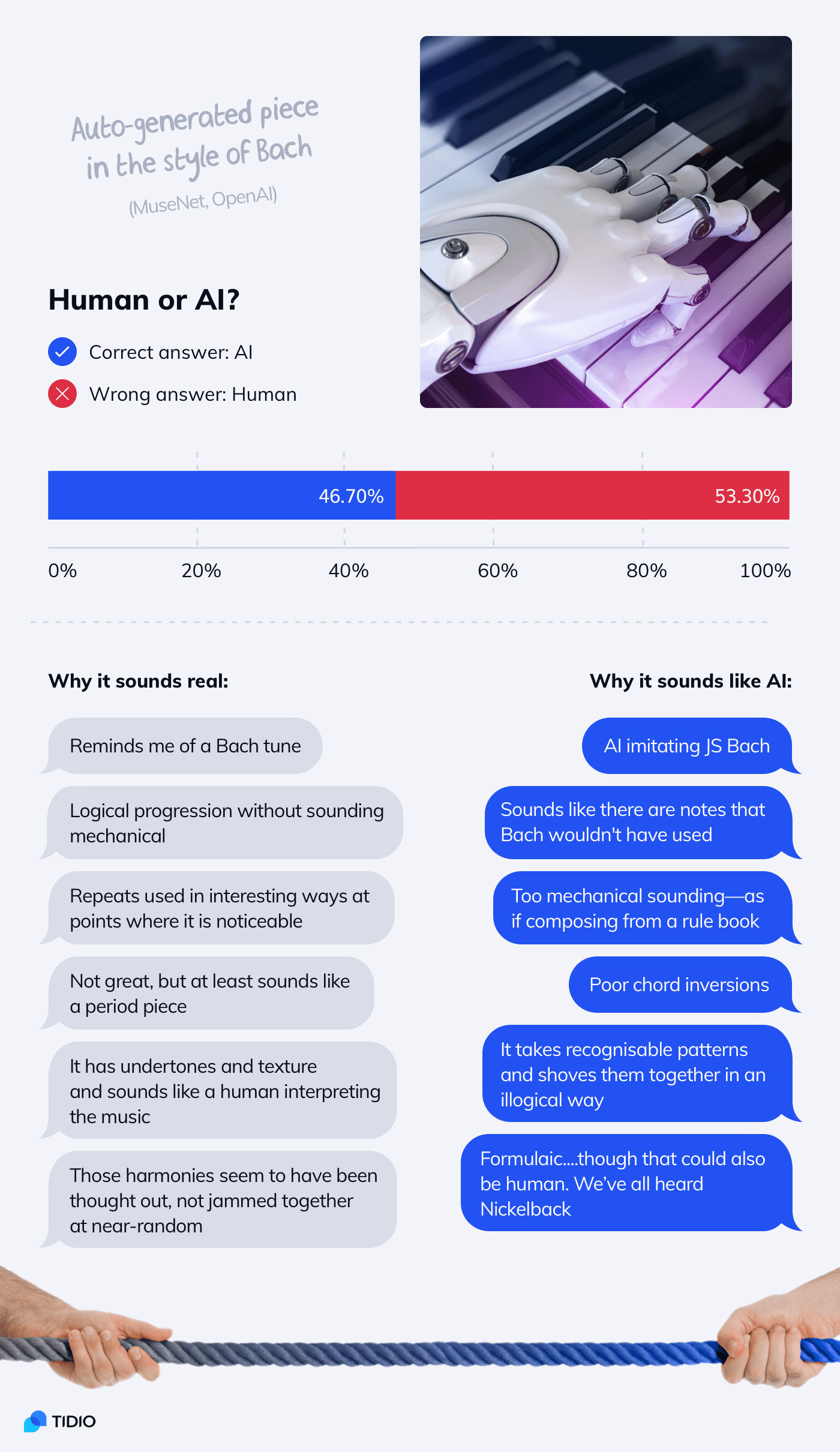

Most respondents felt that music was the most difficult of all the categories included in our AI test.

It is difficult to separate composition from performance, and this turned out to be very misleading. The results were to a large extent shaped by the respondents’ expectations of music.

An EDM track arranged by a human producer was identified as AI-generated by 71.4% of the respondents. On the other hand, a song created by AI (trained on the songs of the Beatles and performed by musicians) convinced 61.6% of people that it was composed by a human.

The songs were perceived as too bad/chaotic for “smart” artificial intelligence or too good/complex for a human. The sense of human inferiority to AI capabilities is one of the recurring themes of the survey.

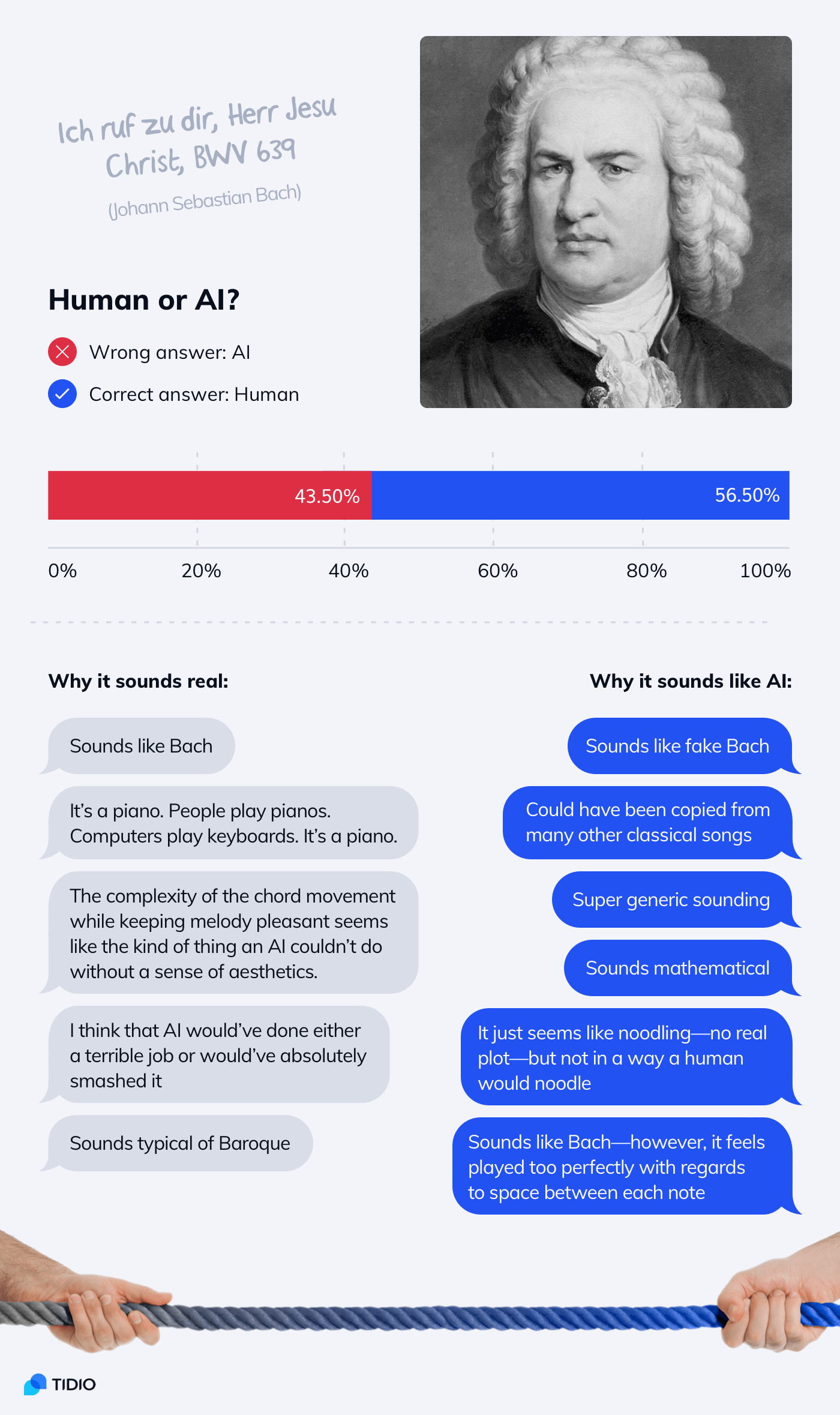

The two examples with “classical” music were quite similar to each other. They also did not contain too many elements that could be perceived as red herrings.

Let’s take a closer look at them.

Now—

Let’s take a quick look at the real McCoy.

One example caught the respondents off guard. It was a composition created by AI but performed by human musicians (“Daddy’s Car”). Almost 40% of them argued that it sounds like it was performed by a human (and therefore must have been composed by one too).

It suggests that musicians who use the support of AI for composing new songs and pieces can mask it with ease. There are many factors that make music sound “human-made” and the actual notes are only one of the elements.

So far, AI won’t create a Tony-worthy musical. But its output can pass as a movie or video game soundtrack (which can be much cheaper and generated in minutes). Since many people can’t tell the difference, we can expect more AI-generated elevator music.

Texts: Can AI Write Articles & Translate?

Writing is in many ways similar to music. In music, we have a specific set of notes and an infinite number of ways to combine them. It’s the same with words and sentences.

Since both AI and humans use the same building blocks, sometimes recognizing AI is downright impossible.

Our survey respondents considered various clues. Sometimes they turned out to be accurate and sometimes not. For example, John Ormsby’s translation of “Don Quixote” from 1885 was identified as AI by almost 66% of the respondents. The text seemed illogical, awkward, and hard to follow.

Quite the opposite happened with the opening lines from “One Hundred Years of Solitude” translated with AI. It convinced 62% of people that it was written by a human translator.

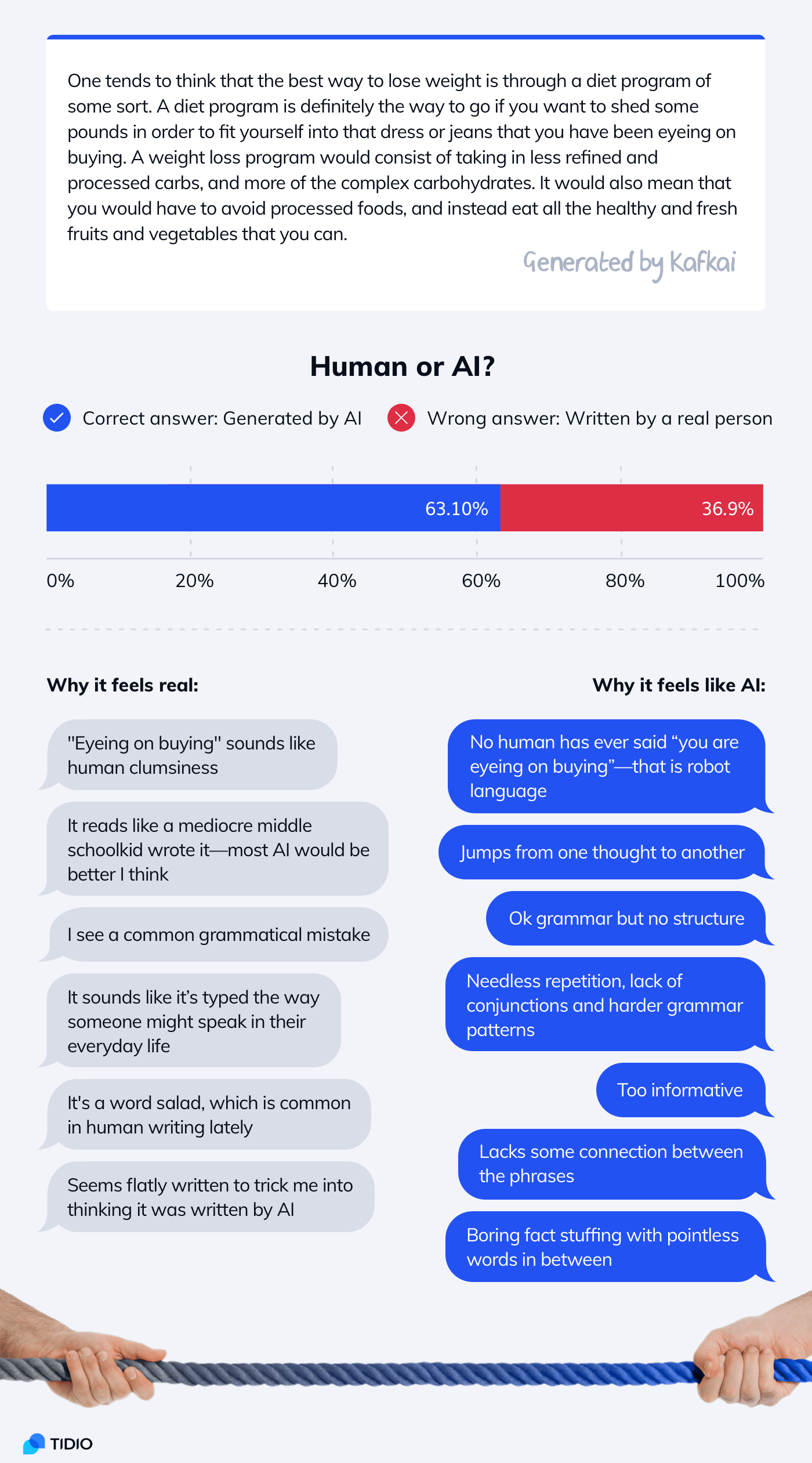

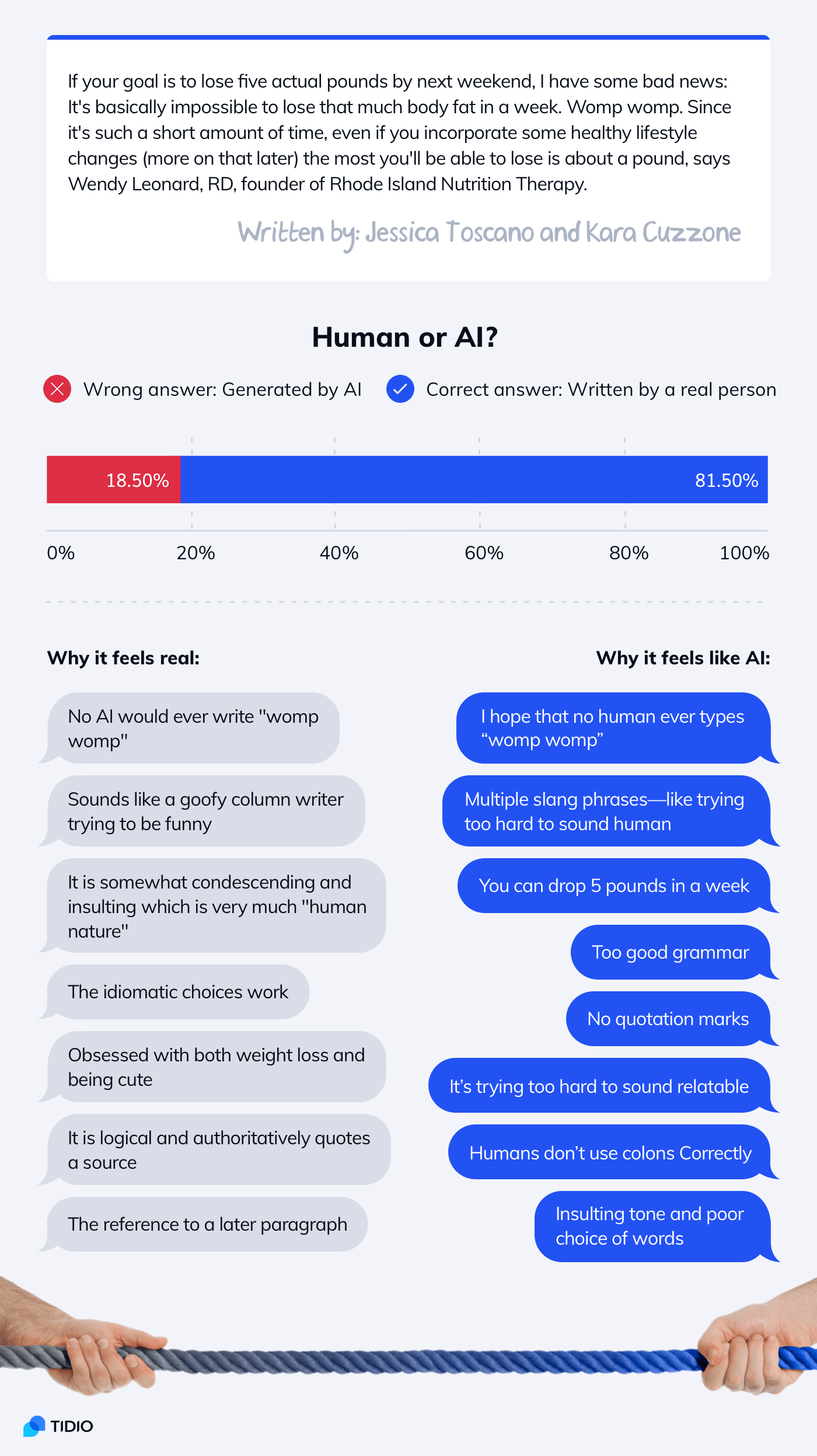

Most of the respondents were correct when judging contemporary lifestyle articles. Our AI test questions included one text written by a commercial AI and one by human journalists. Both articles were about weight loss.

About 81.5% of the respondents recognized the real fragment of an article published in Cosmopolitan as written by a real person. That makes it more than twice as “human” as the AI-generated text, which convinced only 36.9% of them.

As you can see, many elements appear as arguments both for and against the text having been written by AI.

Seems natural and well written—it is an attempt to trick me into selecting human!

Some respondents were getting quite obsessed with bluffs and double bluffs.

AI vs Human in 2022: Insights

One of our survey respondents said:

I learned today that I could be very easily tricked by an AI. I hope I never have to deal with that in a bad way.

With easy-to-use tools, anyone can generate a fake profile picture for social media. Or use a smartphone filter to become a beautiful biker-girl with thousands of fans on Twitter. In spite of being a fifty-year-old man in real life.

AI-based image manipulation, especially combined with Photoshop, can be a powerful tool of deception. Here is one more example from our AI test.

Almost 30% of the respondents thought that the third photo is the original. And they were wrong.

The correct answer was selected by only 20% of the people who took our AI test.

Quite scary, isn’t it?

OK—

So what exactly are we dealing with? Will AI take over the world? Are human beings inferior to machine intelligence? Will our beloved celebrities be replaced with deepfakes?

Artificial Intelligence Timeline:

- 1950: Alan Turing, a computer scientist, creates his famous imitation game a.k.a. the Turing test

- 1966: Joseph Weizenbaum creates Eliza—a computer program that can have conversations (a chatbot)

- 1980: John Searle presents the Chinese room thought experiment and argues that computers cannot develop consciousness

- 1990: Hugh Loebner launches a competition in which artificial intelligence programs try to pass the Turing test

- 2001: Stevan Harnad refutes the Chinese room argument

- 2008: Eugene Goostman becomes the first chatbot to pass the imitation game (but still comes second and doesn’t win the Loebner Prize)

- 2014: Ian Goodfellow invents generative adversarial networks that revolutionize machine learning

It’s 2022.

And it seems that GANs, deep learning text generators, and AI-powered music software can fool most of us. Not all the time and not with enough context given. But still…

Let’s sum it all up.

Bad news:

- Short pieces of text and music created by humans and AI are often indistinguishable even for professionals in a given field

- An AI-generated photo from 2018 was mistaken for a real person by 35.7% of respondents, and one from 2021 by 68.3%. Nobody knows what the 2025 results will be

- It is extremely difficult to tell if some features (like hairstyle or gender) have been altered by AI apps, even if the photo resolution is much higher than that of most avatars and profile photos

- AI text-to-image generators can create convincing, photorealistic images based on prompts and descriptions

- AI can be a powerful tool for spreading misinformation

Good news:

- AI is still more difficult to use than traditional techniques—if someone wants to deceive you, it is easier to steal real photos than to generate fake ones

- Gen Z respondents seem to have a higher level of visual literacy and are more likely to identify fake photos. If dangers related to AI manipulations were to become more common, countering them would probably be just a matter of raising awareness

- AI does well at generating very specific elements with predictable structures (such as faces) but fails with more complex images

The last point is critical. Let me demonstrate.

AI! Create me a cat!

AI:

No! Bad AI! This is just a fake headshot photo of a cat. Show me a complete cat.

(Drumroll)

AI:

See what I mean?

Machines think, if we can call it that, differently than human beings. If we train our AI with 100,000 cat faces, the results will look surprisingly good. The photos have a clear underlying pattern (eyes, ears, whiskers) that the AI can crack. But once there is a body (and countless unpredictable positions the cat body can take), the AI has no clue what’s going on.

The entire cat generated by AI looks like an abomination straight out of a horror movie.

AI can generate some tiny elements, but right now only human writers, artists, and musicians can put them together in a meaningful way.

Here are some AI-generated paintings:

Some of you may think that they are still better than the works of many contemporary artists. But they are just procedural patterns and random elements stitched together. They look hypnotizing but lack structure. This is not real human-like intelligence.

The art of composition and managing larger structures is still out of AI’s reach.

Moreover, AI is not something we should be afraid of. A world of AI-news presenters and AI-teachers does not seem that bad.

Unless you are a news presenter or a teacher of course.

Final Thoughts & Methodology

We are quite used to people mistaking our chatbots for real live chat operators. We kind of assumed that some of the people taking the survey would also have trouble telling the difference between human and AI-generated content.

Still, we’re blown away by the results.

Originally, we published the survey on Amazon Mechanical Turk. We got about 600 responses. The demographic composition of MTurk workers is fairly representative, which was very helpful. However, the scores were somewhat surprising.

We needed a larger sample size.

With the help of Reddit, we managed to get 1,200 additional responses.

Then the survey went viral. It was featured by Vice, which gave us more than 20,000 (!!!) extra responses. And the numbers are still growing.

The survey appeared in the tech news section—most of the respondents were people interested in technology. Both Vice readers and Redditors are more tech savvy than an average person.

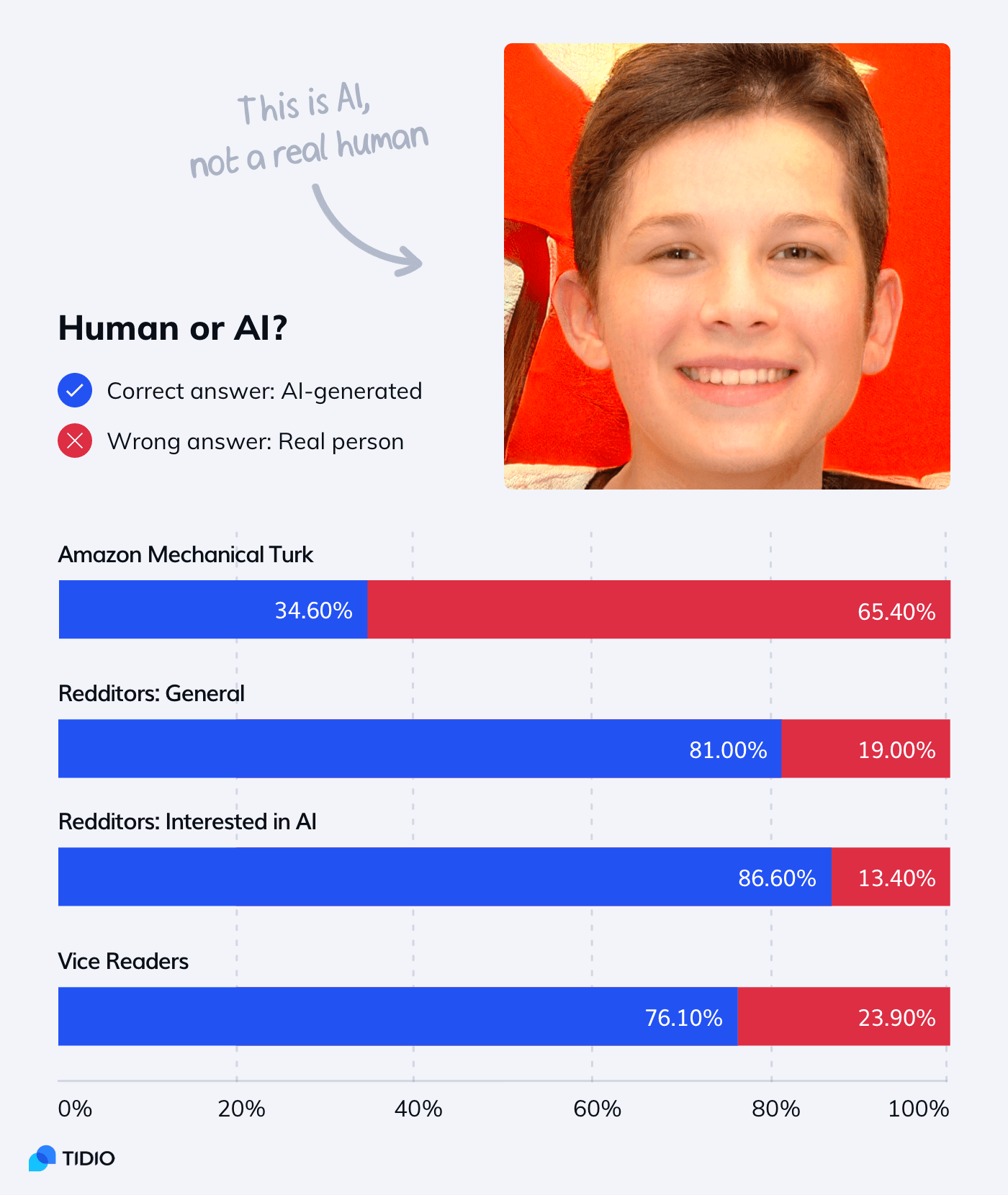

Here is an example of how the results differed between the segments:

In most examples, however, the differences weren’t so drastic. We decided to base the report on our initial sample of 1,766 respondents. The differences between responses from Vice and the general Reddit sample were less than 5% in almost all cases.

We did use all responses in one of the main infographics and the score chart.

Once we collect and analyze more data, an extended PDF version of the report will be published. If you want to receive it via email, click here.

Correct Answers

Here are the right answers to the AI test. There are 18 questions but only 17 points because one of the questions is an attention check.

- Generated by AI

- Real photo

- B

- AI-generated

- AI

- Generated by AI

- Human

- Real photo

- Human (attention check)

- Painting

- E

- Generated by AI

- AI

- Human

- AI

- Human

- Human

- AI
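If you kept notes while taking the test, the scoring rule above (18 questions, 17 points, one attention check) can be sketched like this. It is only an illustration: the names `ANSWER_KEY`, `ATTENTION_CHECK`, and `score` are made up, matching answers by case-insensitive text is an assumption, and how failed attention checks were treated in the study is not stated here, so the function simply reports that flag separately.

```python
# Answer key copied from the list above, in question order.
ANSWER_KEY = [
    "Generated by AI", "Real photo", "B", "AI-generated", "AI", "Generated by AI",
    "Human", "Real photo", "Human", "Painting", "E", "Generated by AI",
    "AI", "Human", "AI", "Human", "Human", "AI",
]
ATTENTION_CHECK = 8  # zero-based index of the ninth answer (the attention check)


def score(responses):
    """Return (points, passed_attention_check) for a list of 18 responses."""
    if len(responses) != len(ANSWER_KEY):
        raise ValueError("expected one response per question")
    points = 0
    passed = True
    for i, (given, correct) in enumerate(zip(responses, ANSWER_KEY)):
        is_match = given.strip().casefold() == correct.casefold()
        if i == ATTENTION_CHECK:
            passed = is_match  # the attention check earns no point
        elif is_match:
            points += 1
    return points, passed
```

Calling `score(ANSWER_KEY)` gives a perfect result of `(17, True)`, because the attention check is tracked but never counted as a point.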

And here are the answers to the questions from this article:

- B is a real photo and A is AI-generated

- A is a real painting and B is AI-generated

Did you get them right?

Sources

Here is the list of tools and resources that we used to prepare our AI test: